In the run-up to the launch of Gemma 4, the AI landscape was evolving rapidly, with growing demand for models that could support diverse applications and languages. At launch, Google DeepMind presented Gemma 4 as a family of state-of-the-art open models intended to push the capabilities of openly available AI.

Gemma 4 supports more than 140 languages, which places it among the most multilingual models available today. That breadth matters for developers building applications for a global audience.

Gemma 4 is released under the Apache 2.0 license, which permits commercial use, modification, and redistribution, and so encourages experimentation and collaboration across the developer community. The models are built to support multi-step planning, autonomous action, offline code generation, and audio-visual processing, all of which are building blocks for sophisticated AI applications.

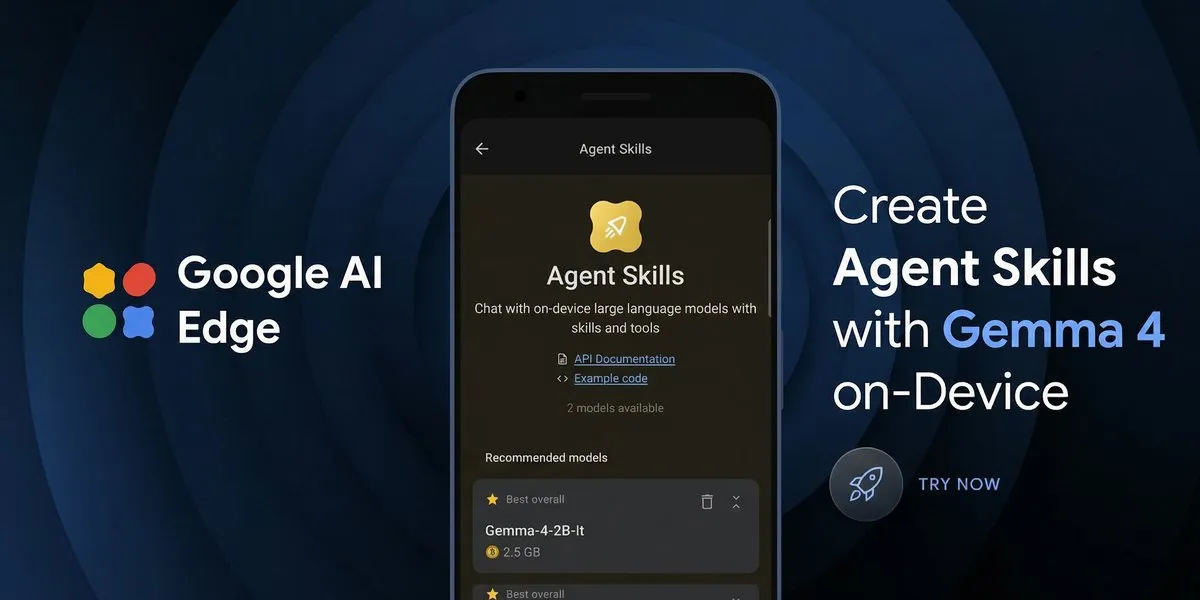

The models are designed to run on a wide range of devices, including phones, desktops, IoT hardware, and robots, which puts them within reach of many users. Their 128K-token context window lets them process long documents and conversations within a single prompt.

The E2B and E4B models accept audio input natively, enabling speech recognition. Gemma 4 can also reach a prefill (prompt-processing) throughput of 133 tokens per second on a Raspberry Pi 5, showing that it runs efficiently even on low-cost hardware.
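To make the Raspberry Pi figure more concrete, the short sketch below converts a prefill throughput into an estimated wait before the first output token. It assumes throughput stays constant as prompts grow, which real hardware often does not guarantee, and the helper name `prefill_seconds` is hypothetical.

```python
# Rough estimate of prefill (prompt-processing) time from a throughput figure.
# Assumption: throughput is constant regardless of prompt length; in practice
# attention cost grows with context, so long prompts are likely to be slower.
PREFILL_TOKENS_PER_SECOND = 133  # figure reported for a Raspberry Pi 5


def prefill_seconds(prompt_tokens: int,
                    tokens_per_second: float = PREFILL_TOKENS_PER_SECOND) -> float:
    """Estimated seconds spent processing the prompt before generation starts."""
    if tokens_per_second <= 0:
        raise ValueError("throughput must be positive")
    return prompt_tokens / tokens_per_second


# Treat "128K" as 128,000 tokens for this illustration.
for n in (1_000, 8_000, 128_000):
    print(f"{n:>7} tokens -> {prefill_seconds(n):7.1f} s")
```

Under this linear assumption, a 1,000-token prompt takes about 7.5 seconds, while filling a full 128K context would take roughly 16 minutes. The long-context case is only arithmetic; the source does not say which model or context length the 133 tokens-per-second figure was measured with.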

Gemma 4 is optimized for fine-tuning on hardware ranging from the billions of Android devices in use to developer workstations. The family also includes 26B and 31B models tailored to specific hardware requirements; the 26B model uses a Mixture of Experts (MoE) architecture that activates only 3.8 billion parameters per token during inference.
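The phrase "3.8 billion active parameters" follows from how Mixture of Experts layers work: a router scores every expert, but only the top-scoring few are evaluated for each token. The sketch below illustrates that generic top-k routing idea with toy scalar "experts" and made-up router scores. Gemma 4's actual router, expert count, and top-k value are not described in the source, and the names `top_k_route` and `moe_layer` are hypothetical.

```python
import math

# Nominal figures from the text; 26B is a rounded total, so the ratio is approximate.
ACTIVE_B, TOTAL_B = 3.8, 26.0
print(f"active fraction per token: {ACTIVE_B / TOTAL_B:.1%}")


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(router_logits, k):
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(x, experts, router_logits, k=2):
    """Weighted sum of only the selected experts; the others are never called."""
    out = 0.0
    for idx, weight in top_k_route(router_logits, k):
        out += weight * experts[idx](x)
    return out


# Toy setup (hypothetical): 8 experts, each just scales its input.
experts = [lambda x, s=s: s * x for s in range(8)]
logits = [0.1, 2.0, -1.0, 1.5, 0.0, -0.5, 0.3, 0.2]
print(top_k_route(logits, k=2))
print(moe_layer(1.0, experts, logits, k=2))
```

With the nominal figures, only about 14.6% of the 26B model's parameters do work for any given token, which is why an MoE model can cost far less compute per token than a dense model of the same total size.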

Developers can now build autonomous agents that call external tools and APIs, a notable step for on-device AI. As Google DeepMind put it, “The era of agentic experiences on-device is here, and we hope you are excited to start building on the edge.”

Gemma 4 also supports high-quality offline code generation, turning a workstation into a local-first AI coding assistant. This is especially useful for developers who want to stay productive without depending on an internet connection.

For developers and businesses, the ability to build powerful, accessible, and open AI solutions is likely to drive innovation across many sectors as the technology matures.

Details of future updates or additional features have not been confirmed, but Gemma 4's current capabilities already make it a leading option in the AI landscape.